Inception Labs presents diffusion large language models

The AI startup Inception Labs has announced Mercury, a new family of large language models (LLMs). The Mercury models are diffusion LLMs (dLLMs).

The details

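- The new dLLMs no longer write text sequentially from left to right, one token at a time. Instead, they generate text the way a diffusion model generates an image: they start from a noisy draft and refine the whole output over several steps. This approach is already common in image and video generation models.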

- According to Inception Labs, the models are up to 10x faster than current state-of-the-art LLMs. The new models generate over 1,000 tokens per second on NVIDIA H100 GPUs.

- You can test the code generation model, Mercury Coder, for free at chat.inceptionlabs.ai. It is Inception Labs' first publicly available dLLM.

- According to Inception Labs, Mercury Coder performs strongly on standard coding benchmarks, outperforming models like GPT-4o Mini and Claude 3.5 Haiku while being up to 10x faster.
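To make the difference from left-to-right generation more tangible, here is a minimal toy sketch in Python. It is not Mercury's algorithm, and nothing in it comes from Inception Labs. The function `toy_denoiser` is a hypothetical stand-in for a trained model: it already knows the target text and attaches random confidence scores. The sketch only illustrates the idea of starting from a fully masked draft and filling in several positions per step, in any order. A real diffusion model could also revise tokens it has already written, which this toy does not do.

```python
import random

MASK = "_"  # placeholder for a position that has not been generated yet


def toy_denoiser(tokens, target, rng):
    """Hypothetical stand-in for a trained model (assumption, not a real API).

    Proposes a token and a random confidence score for every masked position.
    """
    return {
        i: (target[i], rng.random())
        for i, tok in enumerate(tokens)
        if tok == MASK
    }


def diffusion_decode(target, steps=4, seed=0):
    """Fill a fully masked draft over a fixed number of refinement steps."""
    rng = random.Random(seed)
    tokens = [MASK] * len(target)
    history = [list(tokens)]  # snapshot of the draft after every step
    for step in range(steps):
        proposals = toy_denoiser(tokens, target, rng)
        if not proposals:
            break  # nothing left to fill in
        remaining_steps = steps - step
        # Ceiling division: reveal enough positions to finish on the last step.
        k = -(-len(proposals) // remaining_steps)
        # Keep the k proposals with the highest confidence, wherever they are.
        best = sorted(proposals, key=lambda i: proposals[i][1], reverse=True)[:k]
        for i in best:
            tokens[i] = proposals[i][0]
        history.append(list(tokens))
    return tokens, history


target = "def add ( a , b ) : return a + b".split()
final, history = diffusion_decode(target, steps=4)
for draft in history:
    print(" ".join(draft))
```

Each printed line is one refinement step. The draft fills in at scattered positions rather than from left to right, and the number of steps is fixed in advance instead of growing with the length of the text.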

Our thoughts

Diffusion large language models could represent a paradigm shift in LLM development. Diffusion models refine their output iteratively, which allows them to fix mistakes along the way and may reduce hallucinations. According to Inception Labs, earlier attempts to apply diffusion to discrete data such as text and code had not been successful.

We are excited about this approach because it promises lower costs and faster models.

More information: 🔗 Inception Labs News

Magic AI tool

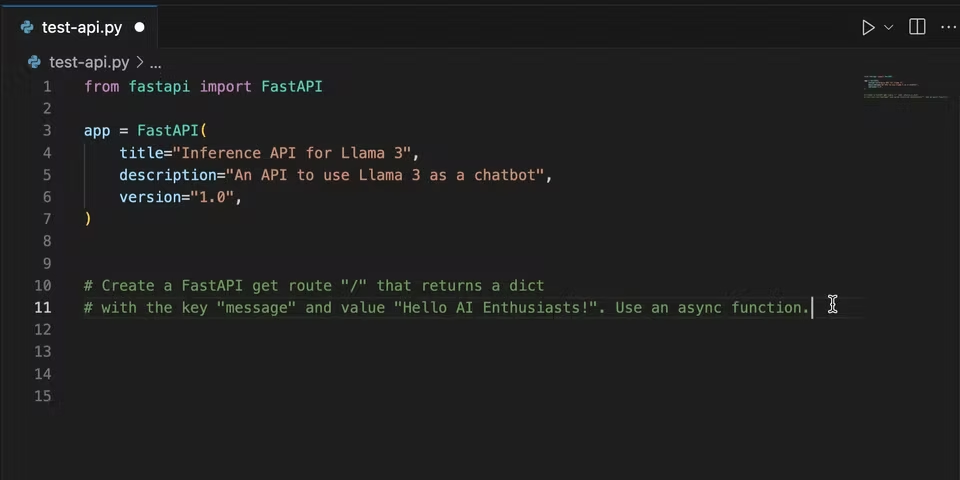

Did you know you can run advanced LLMs like Llama 3, Phi 3, or Codestral locally on your computer? The VS Code plugin Continue and the open-source tool Ollama make it happen.

In our blog article, you will learn how to create a local AI coding assistant. After reading it, you can chat with your codebase in VS Code and use features like autocompletion:

👉🏽 Learn more in our step-by-step guide!
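If you want a quick taste before reading the guide, here is a minimal sketch of talking to a locally running Ollama server from Python. It is not taken from the blog article. It assumes Ollama is installed and listening on its default port 11434, and that you have already pulled the model (for example with `ollama pull llama3`). The helper names `build_request` and `ask` are our own, chosen for illustration.

```python
import json
import urllib.request

# Default address of a local Ollama server (assumption: default port unchanged).
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt, model="llama3"):
    """Build the HTTP request for Ollama's generate endpoint."""
    payload = {
        "model": model,    # must already be pulled locally
        "prompt": prompt,
        "stream": False,   # ask for one JSON object instead of a token stream
    }
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt, model="llama3"):
    """Send the prompt to the local model and return the generated text."""
    with urllib.request.urlopen(build_request(prompt, model)) as resp:
        return json.loads(resp.read())["response"]
```

With the server running, `print(ask("Explain Python decorators in one sentence."))` prints the model's answer. Nothing leaves your machine, which is the main appeal of the local setup.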

Hand-picked articles

- Dive into Investment Research - A Beginner’s Guide to the OpenBB Platform

- Build a Local RAG App to Chat with Earnings Reports

- Visualize financial data with beautiful Sankey diagrams

😀 Do you enjoy our content? If so, why not support us with a small financial contribution? As a supporter, you can comment on and like newsletter editions (e-mail version).